2025 marked a decisive turning point for artificial intelligence. After years of rapid growth, AI stopped being seen as a purely experimental or emerging technology and became a structural lever for businesses, products, and everyday workflows.

Throughout the year, adoption expanded rapidly. Models became increasingly multimodal, software could be built using natural language, early autonomous systems began to appear, and competition among major technology players intensified. At the same time, a more critical conversation emerged. Alongside enthusiasm and investment, concerns grew around inflated expectations, rising costs, and the risk that parts of the industry were moving faster than real value creation could justify.

This tension pushed companies and practitioners to ask more grounded questions. How much does AI actually cost to run at scale? Where does it truly create value? And, perhaps most importantly, how reliable and trustworthy can these systems be when they move from demos into real operations?

These questions did not slow innovation. In 2025, AI entered a more mature phase in which efficiency, reliability, sustainability, and economic viability became just as important as raw capability and model performance.

This shift sets the stage for 2026. If 2025 was about widespread adoption and testing boundaries, 2026 will be about selection and consolidation. Organizations will be forced to make clearer choices about which AI systems are worth deploying, which levels of autonomy are acceptable, and which investments can deliver measurable returns. The focus moves from experimenting with what is possible to deliberately building what is dependable, scalable, and accountable.

Understanding what defined 2025 is therefore essential to understanding 2026. The coming year will not be about more AI everywhere, but about better AI in the places where it truly matters.

The Lessons of 2025

Several defining patterns emerged over the course of 2025.

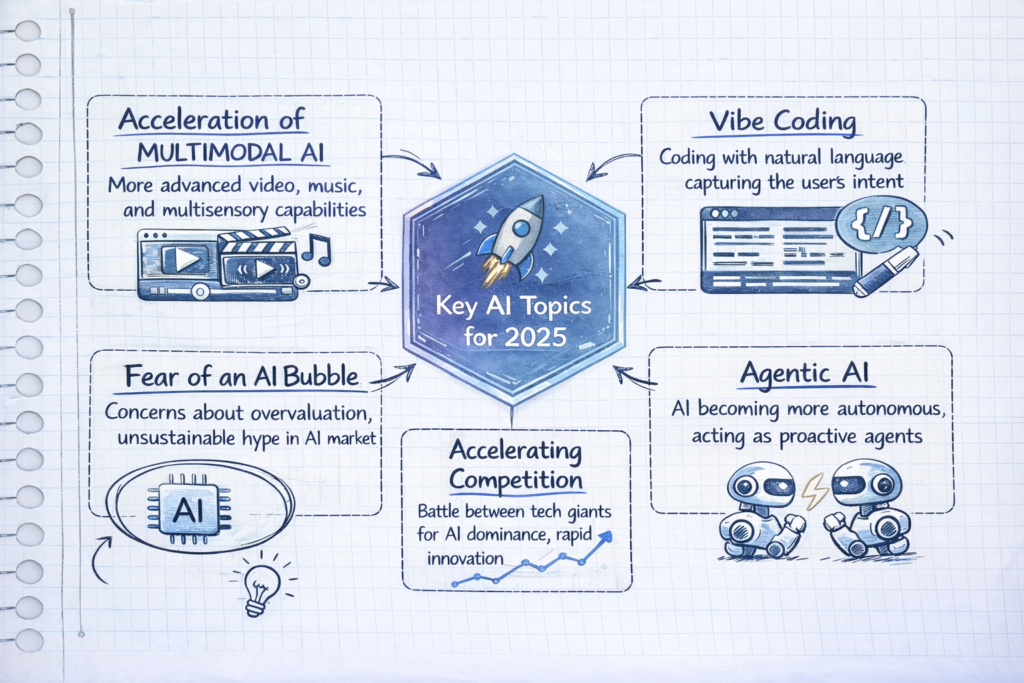

Multimodal AI moved from promise to practice. Systems capable of understanding and generating text, images, audio, and video unlocked new creative and operational possibilities. At the same time, this explosion of capability revealed how quickly costs and complexity could scale when models were pushed beyond controlled environments.

“Vibe coding” and language-driven development dramatically lowered the barrier to building software. But they also exposed new risks: brittle systems, hidden dependencies, and a growing gap between what could be built quickly and what could be maintained responsibly.

Early forms of agentic AI began to appear. Autonomous systems started handling tasks across tools and workflows, offering productivity gains but also raising concerns about oversight, error propagation, and accountability.

Finally, competition intensified. Major technology players raced to dominate infrastructure, models, and platforms. The result was rapid innovation but also growing anxiety about bubbles, overvaluation, and sustainability across the AI ecosystem.

By the end of 2025, it was clear that raw innovation alone was no longer enough. The industry had proven what was possible. The unresolved question was whether it could be made reliable, economical, and safe at scale.

2026 will be about choice

If 2025 was about expansion, 2026 will be about selection.

Organizations will no longer be rewarded for “using AI” in the abstract. They will be forced to make explicit decisions about where AI genuinely belongs in their products and processes and where it does not.

Six demands will increasingly define this new phase.

Trust and verification become foundational

As AI systems move deeper into decision-making, trust can no longer be assumed. Verification, provenance, monitoring, and auditability will become core requirements. Outputs must be explainable, traceable, and robust enough to withstand real-world scrutiny.

Accountable agentic AI replaces unchecked autonomy

Autonomy will not disappear, but it will be constrained. The focus will shift from “how autonomous can this system be?” to “within what boundaries should it operate?” Agentic AI in 2026 must be accountable, with clear human oversight, defined scopes of action, and mechanisms to intervene when things go wrong.
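To make those three requirements concrete, here is a minimal sketch of how an agent's actions might be wrapped in a guard. It is an illustration only, not a reference to any particular agent framework: the class `ActionGuard`, its fields, and the action names in the usage note are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Set, Tuple


@dataclass
class ActionGuard:
    """Hypothetical wrapper that constrains what an agent may do."""

    allowed_actions: Set[str]            # defined scope of action
    needs_approval: Set[str]             # actions that require a human sign-off
    approve: Callable[[str], bool]       # human oversight hook (assumed to block until answered)
    halted: bool = False                 # intervention switch
    audit_log: List[Tuple[str, str]] = field(default_factory=list)

    def halt(self) -> None:
        """Stop all further actions, e.g. when an operator spots a problem."""
        self.halted = True

    def run(self, action: str, execute: Callable[[], str]) -> Optional[str]:
        """Run `execute` only if the action passes every check; log the outcome."""
        if self.halted:
            outcome = "blocked: halted"
        elif action not in self.allowed_actions:
            outcome = "blocked: out of scope"
        elif action in self.needs_approval and not self.approve(action):
            outcome = "blocked: approval denied"
        else:
            result = execute()
            self.audit_log.append((action, "executed"))
            return result
        self.audit_log.append((action, outcome))
        return None
```

In this sketch, a guard created with `allowed_actions={"read_ticket", "issue_refund"}` and `needs_approval={"issue_refund"}` would let the agent read tickets freely, ask a person before refunding, refuse anything else, and stop entirely once `halt()` is called. Every decision lands in `audit_log`, which is the piece that makes the system accountable after the fact.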

AI efficiency and ROI pressure intensify

The era of ignoring compute costs is ending. In 2026, efficiency becomes a competitive advantage. Companies will prioritize smaller, faster, cheaper models where they deliver comparable value, and demand measurable ROI from AI investments. Optimization will matter as much as innovation.
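The selection logic described here can be sketched in a few lines. This is an assumption-laden illustration rather than a recommended method: `ModelOption`, its cost and quality fields, and the threshold are hypothetical stand-ins for whatever metrics an organization actually tracks.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class ModelOption:
    """Hypothetical description of a candidate model."""

    name: str
    cost_per_1k_requests: float  # assumed unit: currency per 1,000 requests
    quality: float               # assumed task score between 0 and 1


def cheapest_adequate(options: Iterable[ModelOption],
                      min_quality: float) -> Optional[ModelOption]:
    """Return the lowest-cost option that meets the quality bar, if any."""
    adequate = [o for o in options if o.quality >= min_quality]
    if not adequate:
        return None
    return min(adequate, key=lambda o: o.cost_per_1k_requests)


def roi(value_delivered: float, total_cost: float) -> float:
    """Simple return on investment: net gain divided by cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (value_delivered - total_cost) / total_cost
```

The design choice worth noting is the order of operations: set the quality bar first, then minimize cost among the models that clear it. A larger model wins only when nothing cheaper delivers comparable value, and `roi` gives a single number to hold each deployment to.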

World models and situational AI gain importance

As systems take on more complex tasks, understanding context becomes critical. World models (AI that can reason about cause, effect, and environment) will be essential for reliability, especially in dynamic or high-stakes settings. Situational awareness will separate useful automation from dangerous shortcuts.

Market consolidation accelerates

Not every AI startup or platform will survive this shift. 2026 will likely see consolidation, with stronger players absorbing or replacing weaker ones. Buyers will prefer fewer, more reliable vendors over fragmented experimentation. Stability becomes a feature.

More AI-powered robots move into reality

Finally, AI will continue to escape the purely digital realm. More AI-powered robots (both physical and software-based) will appear in logistics, manufacturing, healthcare, and services. Their success will depend less on novelty and more on safety, reliability, and integration with existing systems.

From “More AI” to “Better AI”

The shift from 2025 to 2026 marks a change in priorities rather than pace. Innovation will continue, but the emphasis moves toward precision, discipline, and intent.

What 2025 exposed is that raw capability alone is not enough. Systems that scale without governance become fragile. Autonomy without clear limits creates risk. And technical excellence without economic grounding quickly loses relevance in real organizations.

In 2026, AI will increasingly be judged by different criteria: how reliably it behaves under pressure, how clearly responsibility is defined when things go wrong, and how consistently it delivers value relative to its cost. These dimensions will matter as much as model size or benchmark performance.

The year ahead favors AI that earns its place: embedded where it strengthens decisions, simplifies operations, and holds up over time. Progress will be measured less by how broadly AI is deployed and more by how well it performs in the contexts that truly count.